We are starting a monumental shift from purchasing software tools to purchasing labor (zero marginal cost labor). When I say “labor” I’m referring to complete jobs that no longer require a human. Most software companies today sell an incremental productivity lift in the form of a tool, not labor replacement. When AI replaces all the labor that makes up a job, one might expect the market price for that software to correspond to the cost of a salary. This massive change in how much companies can charge for a product has the potential to birth a few magical businesses with high margins, enormous revenues, and unique moats. The next question is how to identify them…

The $1T+ AI companies that emerge over the next decade will:

1.) Be built in very specific “mission critical” use cases

2.) Have AI systems with extremely high output accuracy (i.e., 100-ε%, where “ε” represents an arbitrarily small error)

3.) Have a defensible innovation enabling the 100-ε% accuracy

What is a “mission critical” use case?

A mission critical use case is one that requires 100-ε% accuracy to achieve a meaningful productivity gain. These use cases are best identified by the following three characteristics:

1.) High cost of error: There are large ramifications of inaccuracy (e.g. a bad medical decision mid-trauma surgery leads to death)

2.) Fast utilization speed: Model outputs must be deployed quickly, and there is only a small amount of time to review them (e.g. a self-driving car moving at 80 mph)

3.) Complexity of review: Human review on a reasonable time scale is not realistic or ROI positive. (e.g. evaluating a 500-page AI-generated legal contract)

A well-known example of a mission-critical use case is the self-driving car. For self-driving cars to achieve meaningful productivity gains, drivers must effectively become passengers, able to work on a laptop while paying no attention to the task of driving safely.

In the self-driving example, there are large ramifications for inaccuracy including injury and death. Outputs must be deployed quickly, e.g. navigating a lane change at 80 mph. To achieve a good user experience and actual productivity gains, human review is not possible: if the driver monitors the system, it is not truly self-driving, and outputs have to be deployed too quickly to be flagged for review by a remote pilot.

On the other end of the spectrum, there are many non-mission-critical use cases. Examples include platforms that generate images/videos for marketing. The cost of an error is low, there is plenty of time to review the output, and images/videos can be audited extremely fast by humans.

Why 100-ε% accuracy is important to be a $1T+ company:

There are at least three reasons why 100-ε% accuracy is critical to building a $1T+ AI company:

Pricing Power:

There are thousands of AI companies being built right now in use cases where models with less than 100-ε% accuracy are sufficient. If 100-ε% accuracy is not needed for a use case, tools from general foundation model companies like OpenAI, Anthropic, Midjourney, etc. have made it fairly easy to build 99% accurate systems. As a result, the barriers to starting a non-mission-critical company are quite low. If you believe in an efficient market, over time these categories will become crowded and pricing power will erode. TLDR: if customers are perfectly happy with an AI solution that doesn’t have every single edge case worked out, it is likely not a candidate to be a $1T+ company.

Asymmetric productivity/value gain:

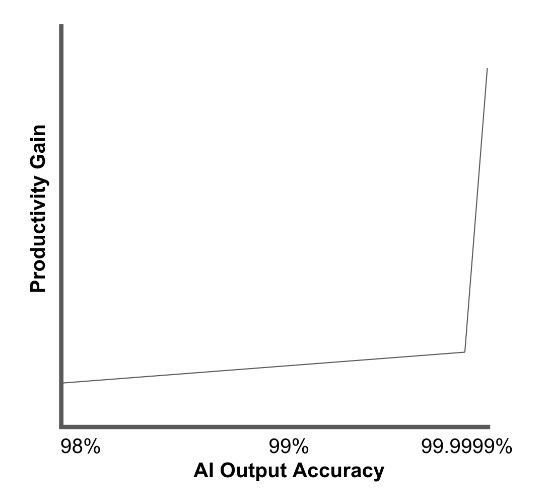

In mission-critical use cases, until 100-ε% accuracy is reached, a human must manually audit all the work being done by the AI. If a human needs to spend meaningful time scrutinizing the output, they lose all the productivity gain. It is important to note that if human output review is needed, you cannot command salary-level pricing for the software. From a business perspective, the potential revenue difference between a 99% and a 100-ε% accurate model is not 1%; it is exponential. In simpler terms, the amount that can be charged for perfecting the last 1% is very large. (This concept is shown in the graphic above.)

This graph shows that in any mission-critical use case, trust in the AI model is essentially binary.
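The step-like shape of that curve can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name, the `trust_threshold` value, and the `review_cost_fraction` value are hypothetical and do not come from any measured data. The only idea taken from the text is that below the trust threshold a human reviews every output, and at or above it the review goes away.

```python
def labor_savings_fraction(
    accuracy: float,
    trust_threshold: float = 0.9999,    # hypothetical stand-in for "100-ε%"
    review_cost_fraction: float = 0.9,  # hypothetical: reviewing costs 90% of doing the job
) -> float:
    """Fraction of the original labor cost saved by the AI system.

    Assumption: below the trust threshold every output is reviewed by a
    human, so the saving is capped no matter how close accuracy gets.
    """
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    if accuracy >= trust_threshold:
        return 1.0  # the human leaves the loop; the full job is replaced
    return 1.0 - review_cost_fraction  # the human still audits everything


for acc in (0.90, 0.99, 0.999, 0.99999):
    print(f"accuracy={acc}: savings={labor_savings_fraction(acc):.2f}")
```

Under these made-up numbers, moving from 90% to 99.9% accuracy changes nothing about the saving, while crossing the threshold jumps it to the full cost of the job. That jump is what allows pricing to move from tool-level to salary-level.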

Winner-take-all dynamic:

Given the exponential value difference between someone who has reached 100-ε% and someone below the threshold, all buyers will shift to the best solution. Unlike traditional software markets, where many solutions can gather market share, these mission-critical solutions are far more likely to become winner-take-all markets. The value/productivity difference is so asymmetric, it’s a no-brainer.

Companies with less than 100-ε% accuracy aren’t bad businesses; they’re just not candidates for labor replacement and can’t command human-salary-level pricing. Without human-salary-level pricing, it is very hard to be worth $1T+.

Why the innovation enabling 100-ε% accuracy must be defensible:

The innovation(s) that enable an AI company to reach 100-ε% accuracy can come in many forms but are not all created equal. In a perfect world, the innovation either has a network effect associated with it or is patentable. A great example of this would be a proprietary network of hardware devices that continually provide unique data to train a new foundation model. There are many use cases that need substantially more data to achieve emergent behaviors (robotic motion planning, untargeted molecular annotation, etc.). The companies that successfully build data collection networks to unlock these kinds of use cases are extremely well positioned. Examples of companies with existing data collection networks include Tesla and Hivemapper.

When a company successfully leverages a defensible innovation to achieve 100-ε% accuracy, it can start earning large returns so quickly that it’s almost impossible for competitors to catch up.

At the other end of the defensibility spectrum is something like a new open-source embedding model. An innovation like this is extremely exciting but can be adopted by anyone and can’t serve as a long-term moat. Even if the new open-source embedding model enables a company to achieve 100-ε% accuracy, the innovation is available to everyone in the market.

How do we get to 100-ε%?

Improvements in model architectures, synthetic data, and hardware will all make high accuracy possible but are just a piece of the answer. The other key innovation will come from well-engineered “systems” of models. When humans need to ensure 100-ε% accuracy they don’t just rely on one human brain. They build systems of checks and balances to aid imperfect processes and reduce the frequency of errors. The same thing will happen for mission-critical AI use cases.

I foresee a world where products will offer classifier redundancy, reasoning, and testing all in one low-latency package uniquely designed for one specific application. Inputs will be interpreted by multiple diverse models, the results compared and reviewed by other models, and the conclusions pressure tested in simulation.
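To see why redundancy helps, here is a minimal sketch of the arithmetic behind majority voting, assuming a binary decision and assuming the models make errors independently of each other. That independence assumption is a strong one: real models trained on similar data tend to fail on the same inputs, so actual gains would be smaller. The function name and parameters are hypothetical.

```python
from math import comb


def majority_vote_error(per_model_error: float, n_models: int) -> float:
    """Probability that a strict majority of the models is wrong.

    Assumptions (not from the essay): a binary decision, every model has
    the same error rate, and errors are statistically independent.
    """
    if not 0.0 <= per_model_error <= 1.0:
        raise ValueError("per_model_error must be between 0 and 1")
    if n_models < 1 or n_models % 2 == 0:
        raise ValueError("use an odd, positive number of models to avoid ties")
    smallest_wrong_majority = n_models // 2 + 1
    # Binomial tail: sum the probability of exactly k wrong models
    # for every k that forms a majority.
    return sum(
        comb(n_models, k)
        * per_model_error**k
        * (1.0 - per_model_error) ** (n_models - k)
        for k in range(smallest_wrong_majority, n_models + 1)
    )


for n in (1, 3, 5):
    print(f"{n} model(s): ensemble error = {majority_vote_error(0.01, n):.2e}")
```

With three independent models that are each 99% accurate, the chance that two or more are wrong at once is roughly 0.0003, about thirty times lower than a single model. Under these idealized assumptions, a system of imperfect models can approach 100-ε% even when no individual model does, which is why the diversity of the models matters as much as their individual accuracy.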

These types of systems aren’t yet practical in January of 2024, but further innovations in cost reduction, latency, power consumption, and model architecture are emerging rapidly.

Over the next 10 years, we’re going to see a few magical businesses that achieve 100-ε% output accuracy in mission-critical use cases. These systems will be hired by humans and companies to replace historically large labor spend categories. Contract sizes will be justifiably large, and revenues will balloon. I am most excited about investing in these businesses and the infrastructure that makes them possible. If you believe you’re building in this area, drop me a note at parker@pillar.vc.

Thank you to my colleagues at Pillar VC for their help with this piece.
